Mobile apps are more popular than ever.

The Google Play Store hosts a whopping 2.8 million apps available for download, whereas Apple’s App Store boasts nearly 2 million of them.

However, all these apps didn’t appear overnight. Building an app takes time, and it requires testing to ensure that your users like it, that it performs well under pressure, and more.

At first glance, it seems like a large project.

However, don’t worry—that’s what we’re here for. In this article, we’ve listed some tips that should significantly improve your mobile app testing process.


Create a strategy for testing different app types

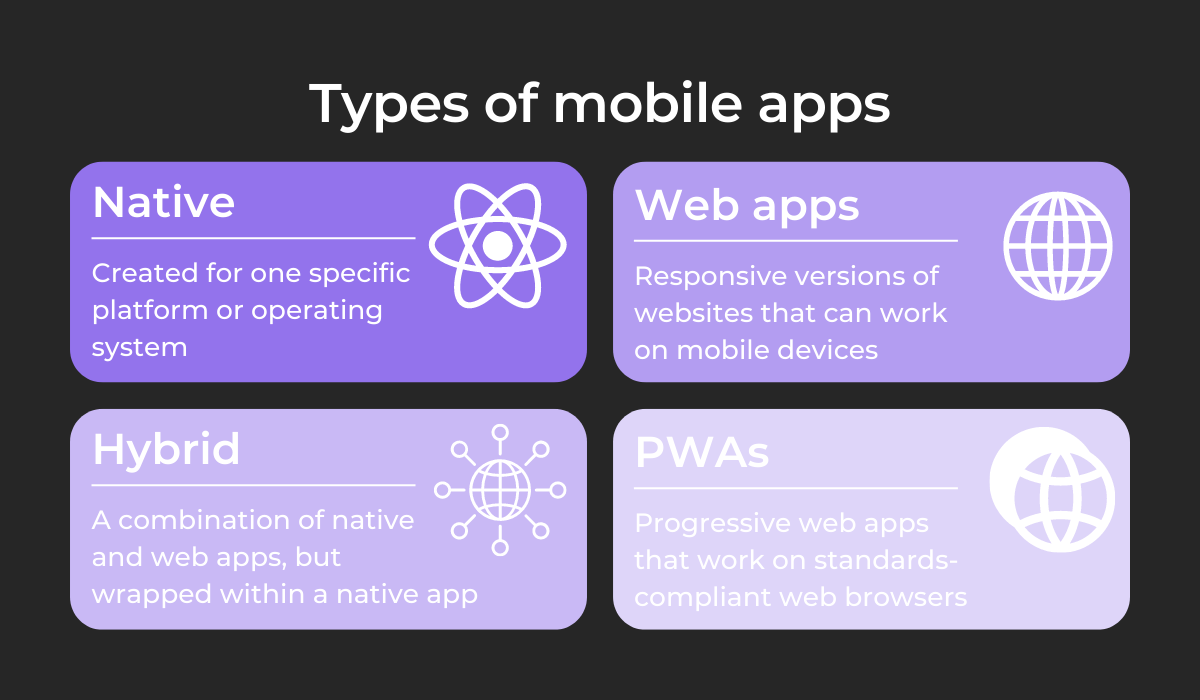

There’s no universal formula for building apps. There are multiple different types of apps, and each has its own specifications, requiring different approaches during testing.

As such, creating a strategy for testing each app variant is a good idea.

There are four mobile app types: native apps, hybrid apps, web apps, and progressive web apps (PWAs).

Although native and hybrid apps utilize different underlying technologies, they are still functionally quite similar. As such, the testing approach can be the same.

In general, functional testing should be your focus. You’ll want to test built-in resources such as the camera and location services, as well as screen orientation and gestures.

Furthermore, considering the countless devices now available on the market, compatibility testing is necessary.


Connectivity testing is also helpful for checking how the app behaves on different connection types and how it operates without a connection.
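Connectivity behavior is easiest to test when the network layer can be swapped for a controllable fake. The Python sketch below assumes a hypothetical `FeedRepository` that should keep serving its last cached copy when the connection drops; `FakeNetwork` is an invented stand-in for the real network layer. It illustrates the idea rather than how any particular framework does it.

```python
from typing import Callable, List


class FakeNetwork:
    """Hypothetical test double for the app's network layer; toggle `online`."""

    def __init__(self, online: bool = True) -> None:
        self.online = online

    def fetch_feed(self) -> List[str]:
        if not self.online:
            raise ConnectionError("network unavailable")
        return ["post 1", "post 2"]


class FeedRepository:
    """Hypothetical app component that falls back to its cache when offline."""

    def __init__(self, fetch: Callable[[], List[str]]) -> None:
        self._fetch = fetch
        self._cache: List[str] = []

    def load(self) -> List[str]:
        try:
            self._cache = self._fetch()  # refresh the cache on success
        except ConnectionError:
            pass  # offline: keep serving whatever was cached last
        return list(self._cache)


if __name__ == "__main__":
    network = FakeNetwork(online=True)
    repo = FeedRepository(network.fetch_feed)
    print(repo.load())  # online: fresh data
    network.online = False
    print(repo.load())  # offline: cached data instead of a crash
```

The same pattern extends to slow or flaky connections: the fake can add delays or fail every other call, letting you check timeouts and retry logic without touching real network settings.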

When conducting tests, you’d do well to invest in a framework to assist you. For example, Selendroid will greatly facilitate testing both native and hybrid apps.

Selendroid drives native Android apps through the Selenium WebDriver API, which makes it a valuable asset for native testing.

It’s equally useful for hybrid app testing:

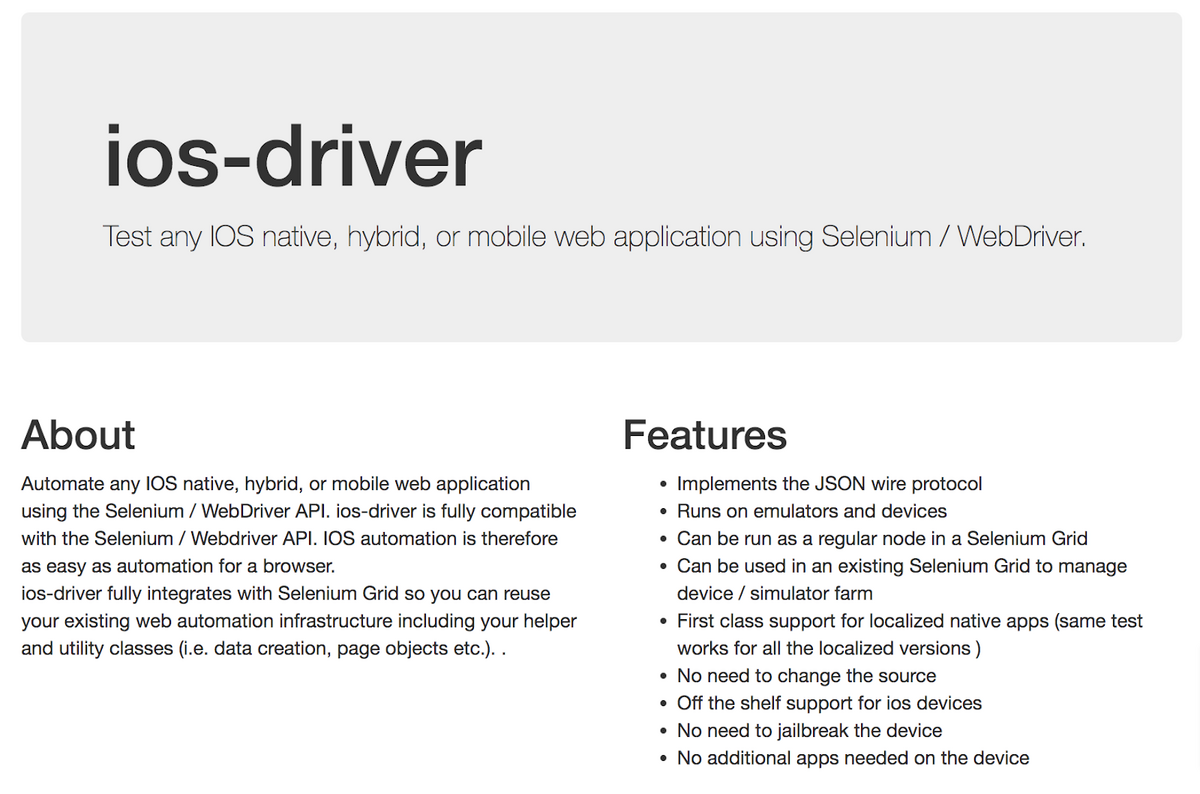

Although this framework is designed for Android, you can cover iOS by pairing Selenium with ios-driver, which exposes iOS apps through the same WebDriver API.

With these resources, you’ll have an easy time testing native and hybrid apps on both Android and iOS.

Moving on to web apps and PWAs: since they run in the browser, your testing should cover the following areas:

- Visual user interface

- Cellular data use

- App performance

- Responsiveness

- Connectivity issues

- Battery usage

- Accessibility

- Discoverability

All of these elements are essential in a browser environment. For example, web apps rely heavily on transferring data from a server, which is why cellular data use is critical.

Likewise, heavy JavaScript execution can drain the battery.

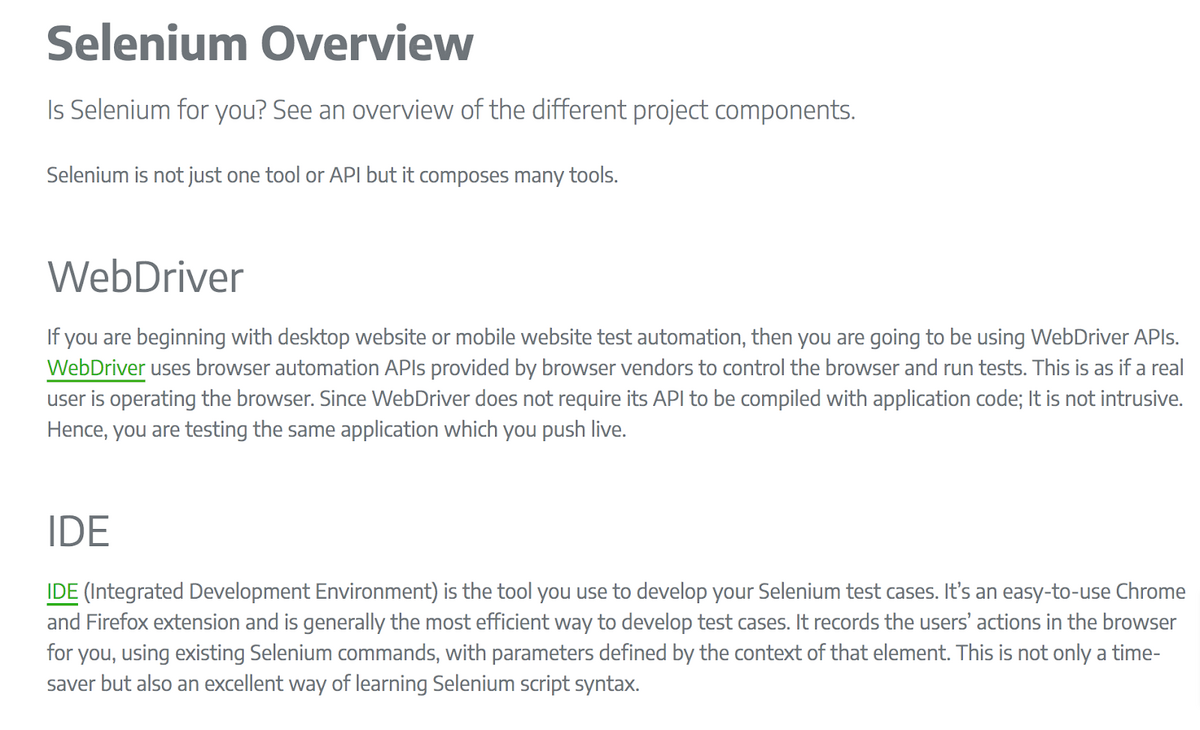

Luckily, there are also tools for testing web apps and PWAs. The aforementioned Selenium is highly popular, as it can automate tests across different browsers and platforms.

With Selenium, you can automate your testing and trial all your web apps and PWAs quickly and efficiently.

With this arsenal of tools and app knowledge, you should be able to devise an efficient and effective strategy for each mobile app type.

Begin using the QAOps framework

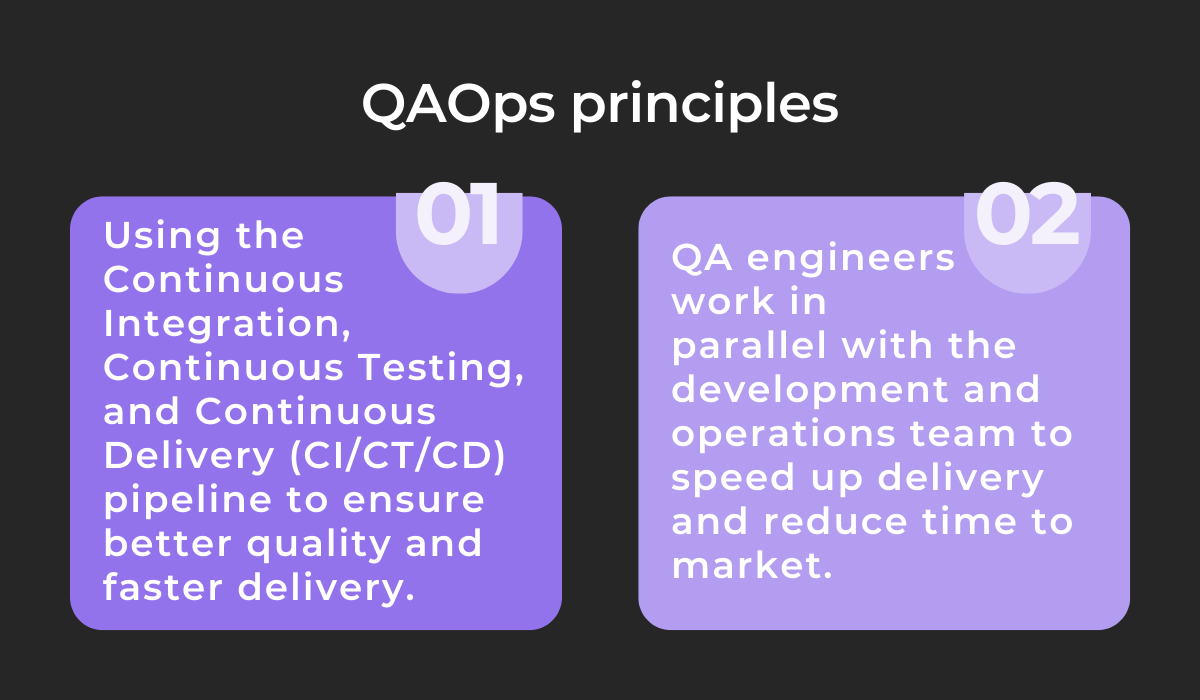

You’ve undoubtedly heard of QA and have likely worked with DevOps, but have you ever encountered QAOps?

The framework combines QA testing with DevOps processes, creating feedback loops to improve testing efficiency.

Traditionally, QA testing is an isolated activity carried out exclusively by testers after development, which can delay releases and hamper communication between developers and testers.

QAOps emerged as a better alternative, as the framework breaks down these silos and integrates QA procedures into the CI/CT/CD pipeline.

In a nutshell, QAOps is based on two main principles: continuous collaboration between teams and constant adaptability to new developments.

These two maxims form the core of the framework.

To achieve this, QAOps relies on four primary practices: automated testing, parallel testing, scalability testing, and the integration of QA with DevOps.

Automated testing capitalizes on modern software tools to decrease human involvement in testing, saving a significant amount of time.

For example, Appium is an open-source framework built specifically for automating mobile app tests.

This tool is a huge asset, as the QA tester won’t have to perform every single test manually, which saves both time and money.
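Appium drives a real app on a device or emulator, so it can’t be shown in a self-contained snippet. The principle it builds on, tests that run without a human tapping through the app, can be sketched with Python’s built-in `unittest` module. The `apply_coupon` function is a hypothetical piece of app logic invented for this example.

```python
import unittest


def apply_coupon(total_cents: int, code: str) -> int:
    """Hypothetical app logic under test: 10% off with the code SAVE10."""
    if code.strip().upper() == "SAVE10":
        return total_cents - total_cents // 10
    return total_cents


class CouponTests(unittest.TestCase):
    def test_valid_code_applies_discount(self) -> None:
        self.assertEqual(apply_coupon(2000, "SAVE10"), 1800)

    def test_code_is_case_insensitive(self) -> None:
        self.assertEqual(apply_coupon(2000, " save10 "), 1800)

    def test_unknown_code_is_ignored(self) -> None:
        self.assertEqual(apply_coupon(2000, "BOGUS"), 2000)


if __name__ == "__main__":
    # exit=False lets the script keep running after the tests, e.g. in a larger CI step
    unittest.main(argv=["coupon-tests"], exit=False)
```

Once tests like these exist, a CI server can run them on every commit, which is where the time savings come from.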

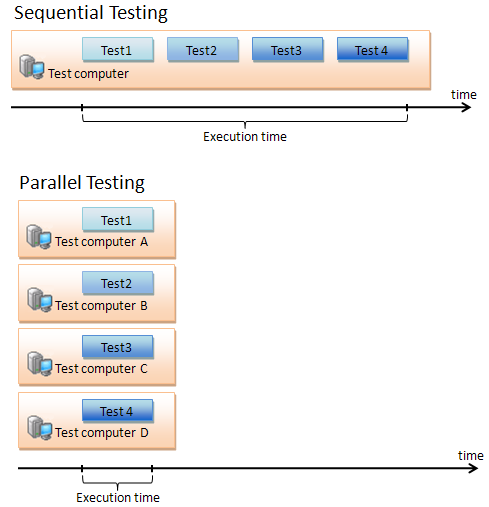

Another tactic for accelerating testing is parallel testing.

Sequential testing is slow, as each test must finish before the next one can start. Instead, why not run tests simultaneously and gather the results at the same time?

Doing so accelerates the testing process considerably: given enough devices or machines, a parallel run takes roughly as long as its slowest test rather than the sum of all test durations.
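The sketch below illustrates the difference with Python’s `concurrent.futures`. Each hypothetical test only sleeps for 0.2 seconds, standing in for a slow, device-bound UI test; in a real setup, each worker would typically drive its own device or emulator.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Tuple

TestFn = Callable[[], str]


def make_test(name: str, duration: float) -> TestFn:
    """Hypothetical test that only waits, standing in for a slow UI test."""
    def test() -> str:
        time.sleep(duration)  # simulate waiting on a device or the network
        return f"{name}: passed"
    return test


def run_sequential(tests: List[TestFn]) -> List[str]:
    return [test() for test in tests]


def run_parallel(tests: List[TestFn], workers: int = 5) -> List[str]:
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() preserves input order, so results line up with `tests`
        return list(pool.map(lambda test: test(), tests))


def timed(runner: Callable[[List[TestFn]], List[str]],
          tests: List[TestFn]) -> Tuple[List[str], float]:
    start = time.perf_counter()
    results = runner(tests)
    return results, time.perf_counter() - start


if __name__ == "__main__":
    suite = [make_test(f"test_{i}", 0.2) for i in range(5)]
    _, seq = timed(run_sequential, suite)
    _, par = timed(run_parallel, suite)
    print(f"sequential: {seq:.2f}s, parallel: {par:.2f}s")
```

With five 0.2-second tests, the sequential run takes at least one second, while the parallel run takes roughly the length of a single test. Threads work here because the tests spend their time waiting rather than computing.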

Scalability testing is another crucial factor.

Once the software is live, you’ll need to examine its performance under various loads, checking if it still works correctly regardless of user request volume.

In sum, scalability testing evaluates how well the app handles traffic.

Finally, the most crucial aspect of QAOps is integrating QA with DevOps.

One possible approach is to instruct your developers to write test cases and then have the DevOps team locate potential issues with the help of the QA team.

That way, all groups work together and can better understand each department’s tasks and processes.

Keep on top of performance testing

How will your mobile app function when there’s a sudden influx of users? Will it still operate smoothly when other apps are running?

Your app should run seamlessly in both scenarios, however difficult the conditions.

To ensure this, you’ll need to keep on top of performance testing—testing that evaluates how the app performs under different workloads.

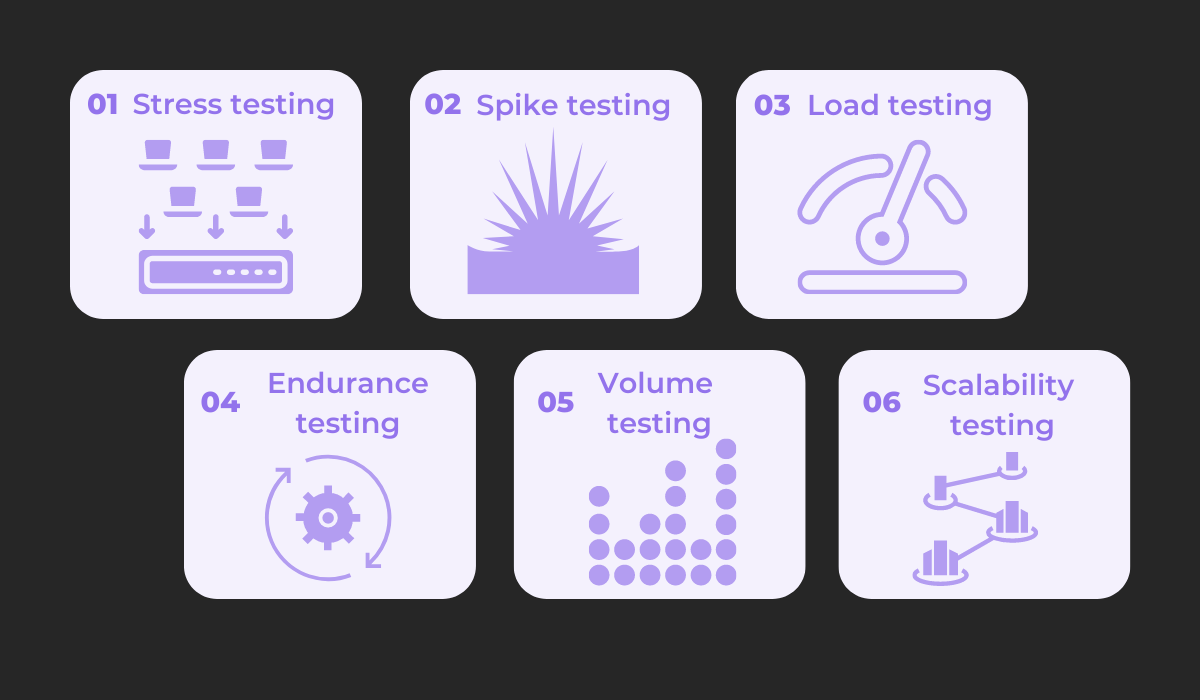

There are six performance testing variants: stress, spike, load, endurance, volume, and scalability testing.

Stress testing, also known as fatigue testing, judges how well the app operates under extreme conditions that exceed its normal capacity.

It is chiefly used to find the limit at which the app malfunctions or crashes.
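A stress test ramps up load until something breaks. This Python sketch assumes a hypothetical `FakeServer` that can handle only a fixed number of concurrent requests and rejects the rest; `find_breaking_point` adds simulated users in steps of five until requests start failing.

```python
import threading
import time
from typing import Optional


class FakeServer:
    """Hypothetical backend that serves at most `capacity` requests at once."""

    def __init__(self, capacity: int, hold_seconds: float = 0.2) -> None:
        self._slots = threading.BoundedSemaphore(capacity)
        self._hold = hold_seconds

    def handle_request(self) -> bool:
        # Non-blocking acquire: if every slot is busy, the request is rejected.
        if not self._slots.acquire(blocking=False):
            return False
        try:
            time.sleep(self._hold)  # simulated work while holding a slot
            return True
        finally:
            self._slots.release()


def error_rate(server: FakeServer, users: int) -> float:
    """Fire `users` simultaneous requests and return the fraction that failed."""
    barrier = threading.Barrier(users)
    lock = threading.Lock()
    failures = 0

    def user() -> None:
        nonlocal failures
        barrier.wait()  # release every simulated user at the same moment
        if not server.handle_request():
            with lock:
                failures += 1

    threads = [threading.Thread(target=user) for _ in range(users)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return failures / users


def find_breaking_point(server: FakeServer, start: int = 5, step: int = 5,
                        max_users: int = 100,
                        max_error_rate: float = 0.0) -> Optional[int]:
    """Increase load step by step until the error rate exceeds the threshold."""
    users = start
    while users <= max_users:
        if error_rate(server, users) > max_error_rate:
            return users
        users += step
    return None


if __name__ == "__main__":
    print("breaking point:", find_breaking_point(FakeServer(capacity=12)))
```

With a capacity of 12, the ramp should first see failures at 15 simultaneous users. In a real test, `handle_request` would call your backend, and the threshold would usually be an acceptable error rate rather than zero.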

Similarly, spike testing measures the app’s performance when the load increases suddenly and substantially, e.g., if a massive number of users log on unexpectedly at the same time.
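Spike behavior can be reasoned about with a simple queue model before you run it against real infrastructure. The sketch below is a toy simulation under stated assumptions: a hypothetical server processes a fixed number of requests per tick, and traffic jumps from 10 to 200 requests for a single tick. It measures the peak backlog and how many ticks the queue needs to drain.

```python
from typing import List


def spike_profile(baseline: int, spike: int, before: int, after: int) -> List[int]:
    """Requests arriving per tick: steady traffic, one sudden burst, steady again."""
    return [baseline] * before + [spike] + [baseline] * after


def simulate_backlog(arrivals: List[int], capacity_per_tick: int) -> List[int]:
    """Queued requests after each tick for a hypothetical fixed-capacity server."""
    backlog = 0
    history = []
    for incoming in arrivals:
        backlog = max(0, backlog + incoming - capacity_per_tick)
        history.append(backlog)
    return history


def ticks_to_recover(history: List[int], spike_index: int) -> int:
    """Ticks from the spike until the queue is empty again (-1 if it never drains)."""
    for offset, queued in enumerate(history[spike_index:]):
        if queued == 0:
            return offset
    return -1


if __name__ == "__main__":
    arrivals = spike_profile(baseline=10, spike=200, before=5, after=15)
    history = simulate_backlog(arrivals, capacity_per_tick=30)
    print("peak backlog:", max(history))
    print("ticks to recover:", ticks_to_recover(history, spike_index=5))
```

With a capacity of 30 requests per tick, the backlog peaks at 170 and drains nine ticks after the spike. With a capacity of 10, it never recovers, which is exactly the kind of result a spike test should surface.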

With load testing, you observe the software’s behavior under various load levels, i.e., different numbers of users using the app simultaneously.

This testing variant is primarily focused on response time and system stability.
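Since load testing centers on response times, a minimal sketch records the latency of every call and summarizes it per load level. `fake_endpoint` is a hypothetical placeholder that sleeps for 10 ms; in practice, it would send a real request to your app’s backend.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def fake_endpoint() -> None:
    """Hypothetical endpoint; a real test would send a request here."""
    time.sleep(0.01)


def timed_call(fn: Callable[[], None]) -> float:
    start = time.perf_counter()
    fn()
    return time.perf_counter() - start


def load_test(fn: Callable[[], None], users: int,
              requests_per_user: int) -> Dict[str, float]:
    """Make users * requests_per_user calls with `users` concurrent workers."""
    total = users * requests_per_user
    with ThreadPoolExecutor(max_workers=users) as pool:
        latencies = list(pool.map(lambda _: timed_call(fn), range(total)))
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {
        "users": users,
        "median_ms": statistics.median(latencies) * 1000,
        "p95_ms": cuts[94] * 1000,  # 95th percentile response time
        "max_ms": max(latencies) * 1000,
    }


if __name__ == "__main__":
    for users in (1, 5, 10):
        print(load_test(fake_endpoint, users, requests_per_user=20))
```

Percentiles such as p95 are usually more informative than averages, because a handful of very slow responses can disappear into the mean.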

Endurance (or soak) testing dissects the app’s performance over prolonged periods of average load. The main objective is to unearth memory leaks.
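Memory leaks in a soak test show up as memory that keeps growing while the workload stays constant. The Python sketch below uses the standard `tracemalloc` module to compare two hypothetical handlers: one that accidentally keeps every buffer it creates, and one that releases it.

```python
import tracemalloc
from typing import Callable, List

_sessions: List[bytearray] = []


def leaky_handler() -> None:
    """Hypothetical handler with a bug: it keeps every session buffer forever."""
    _sessions.append(bytearray(1024))


def clean_handler() -> None:
    buffer = bytearray(1024)  # freed as soon as the function returns
    buffer[0] = 1


def memory_growth(handler: Callable[[], None], iterations: int = 5_000) -> int:
    """Bytes still allocated after calling `handler` many times in a row."""
    tracemalloc.start()
    try:
        before, _ = tracemalloc.get_traced_memory()  # (current, peak)
        for _ in range(iterations):
            handler()
        after, _ = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return after - before


if __name__ == "__main__":
    print("leaky:", memory_growth(leaky_handler), "bytes")
    print("clean:", memory_growth(clean_handler), "bytes")
```

The leaky handler should retain several megabytes after 5,000 calls, while the clean one should stay close to zero. A real endurance run would last hours and sample memory periodically rather than once.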

Volume testing, otherwise known as flood testing, measures how the application deals with large data quantities.

This testing type checks whether the app gets overwhelmed and helps determine which fail-safes to implement.
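A volume test feeds progressively larger data sets into the app and checks that it either copes or fails gracefully. In this sketch, `import_contacts` and its 100,000-row limit are hypothetical; the limit plays the role of a fail-safe that rejects oversized input instead of letting it exhaust memory.

```python
from typing import Dict, Iterable, List, Sequence

MAX_ROWS = 100_000  # hypothetical fail-safe limit


class TooMuchData(Exception):
    """Raised instead of letting an oversized import exhaust memory."""


def import_contacts(rows: Iterable[str], max_rows: int = MAX_ROWS) -> List[str]:
    imported: List[str] = []
    for i, row in enumerate(rows):
        if i >= max_rows:
            raise TooMuchData(f"more than {max_rows} rows")
        imported.append(row.strip())
    return imported


def volume_test(sizes: Sequence[int]) -> Dict[int, str]:
    """Feed increasingly large data sets in and record how the import copes."""
    outcomes: Dict[int, str] = {}
    for size in sizes:
        rows = (f" contact {n} " for n in range(size))  # generated lazily
        try:
            outcomes[size] = f"ok ({len(import_contacts(rows))} rows)"
        except TooMuchData as exc:
            outcomes[size] = f"rejected: {exc}"
    return outcomes


if __name__ == "__main__":
    print(volume_test([1_000, 100_000, 1_000_000]))
```

The two smaller data sets import normally, while the largest one is rejected with a clear error rather than crashing the app.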

Finally, scalability testing examines how an application will handle increasing amounts of load and processing. This is done by incrementally adding to the load volume.
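Incrementally increasing the load can reveal where throughput stops growing. This Python sketch assumes a hypothetical server with four worker threads and a 20 ms request handler; simulated users each send five requests back to back, and the number of users doubles at every step.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Sequence


def handle_request() -> None:
    """Hypothetical request handler: 20 ms of simulated work."""
    time.sleep(0.02)


def throughput(users: int, server_workers: int = 4,
               requests_per_user: int = 5) -> float:
    """Requests per second when `users` clients each send requests back to back."""
    with ThreadPoolExecutor(max_workers=server_workers) as server:
        def user_session() -> None:
            for _ in range(requests_per_user):
                server.submit(handle_request).result()  # wait for each response

        start = time.perf_counter()
        # Leaving this block waits until every simulated user has finished.
        with ThreadPoolExecutor(max_workers=users) as clients:
            for _ in range(users):
                clients.submit(user_session)
        elapsed = time.perf_counter() - start
    return users * requests_per_user / elapsed


def scalability_test(levels: Sequence[int] = (1, 2, 4, 8)) -> Dict[int, int]:
    return {users: round(throughput(users)) for users in levels}


if __name__ == "__main__":
    print(scalability_test())
```

Throughput should roughly quadruple between one and four users and then flatten at eight, because the four workers are saturated. That plateau marks the point where the system would need more capacity to scale further.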

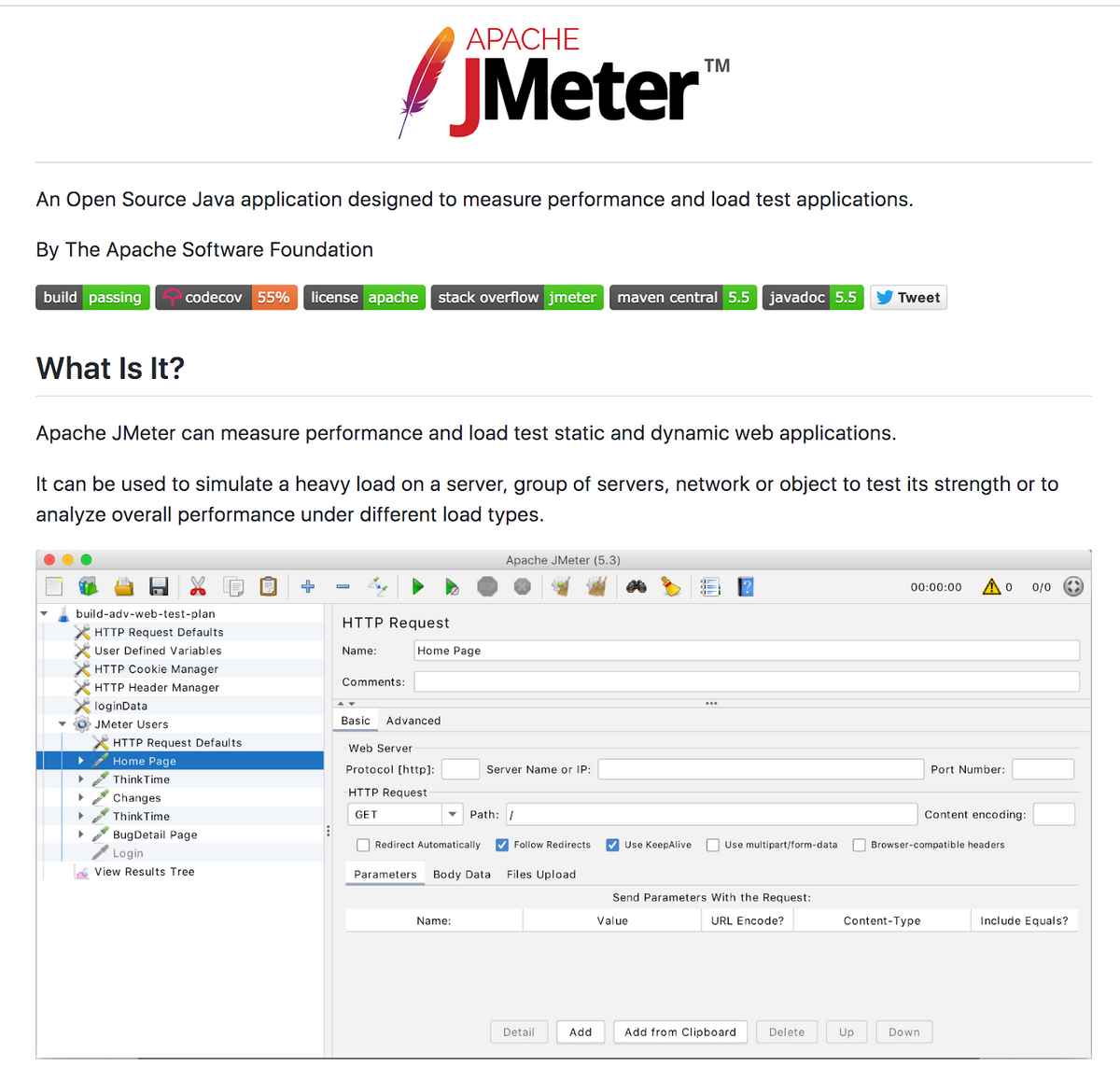

This is a lot to cover, but luckily, there are tools to assist you. Apache JMeter is one of the most popular open-source performance testing tools, with a wide range of features.

The tool even includes multiple load generators and controllers, ideal for performance testing.

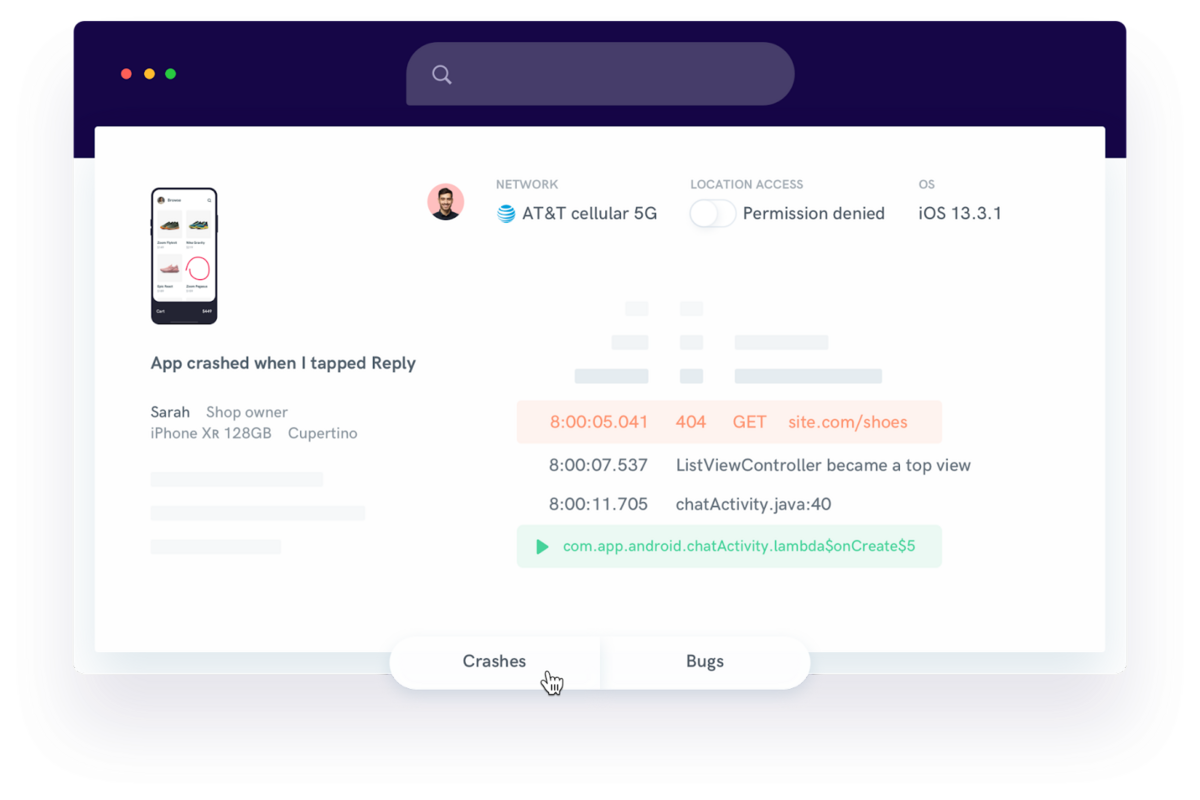

Another helpful tool for tracking performance testing is Shake. This resource automates bug and crash reporting, instantly generating a report detailing the problem’s specifications.

Each report contains everything developers need: screenshots, screen recordings, steps to reproduce the error, the app version, and more.

This dramatically increases the chances of finding the source of the problem and helps the developers stay on top of performance testing.

Make time for app regression testing

Although performance testing is non-negotiable, regression testing is equally important. Imagine if you released a new app version, and suddenly users couldn’t make purchases.

Regression testing is designed to avoid such unpleasant situations—it ensures that new app versions won’t disable or break any functionalities from previous versions.

In an ideal world, each new version release should undergo regression testing.
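One lightweight way to automate regression checks is to record the outputs of the previous release as a baseline and compare each new version against it. The sketch below assumes a hypothetical `checkout_total` function, echoing the purchase scenario above; `checkout_total_buggy` simulates a release that breaks discounts.

```python
from typing import Callable, Dict, List, Tuple

# Outputs recorded from the previous release (hypothetical "golden" values).
# Key: (price in cents, discount code) -> expected total in cents.
BASELINE: Dict[Tuple[int, str], int] = {
    (1000, ""): 1000,
    (1000, "SAVE10"): 900,
    (2500, "SAVE10"): 2250,
}


def checkout_total(price_cents: int, code: str) -> int:
    """New release: percentage discount looked up by code."""
    percent = {"SAVE10": 10}.get(code, 0)
    return price_cents - price_cents * percent // 100


def checkout_total_buggy(price_cents: int, code: str) -> int:
    """Simulated bad release: subtracts the percentage as if it were cents."""
    percent = {"SAVE10": 10}.get(code, 0)
    return price_cents - percent


def find_regressions(fn: Callable[[int, str], int],
                     baseline: Dict[Tuple[int, str], int]) -> List[str]:
    """Compare a new version against the recorded baseline; list every mismatch."""
    failures = []
    for args, expected in baseline.items():
        actual = fn(*args)
        if actual != expected:
            failures.append(f"{fn.__name__}{args}: expected {expected}, got {actual}")
    return failures


if __name__ == "__main__":
    print(find_regressions(checkout_total, BASELINE))
    print(find_regressions(checkout_total_buggy, BASELINE))
```

The correct version produces no mismatches, while the buggy one reports two, pointing straight at the broken discount logic.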

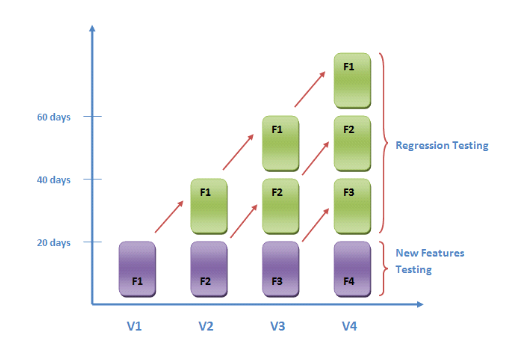

Each new release adds code that can interfere with older features, so regression testing is a continuous requirement as your app grows. That way, you can ensure older features still function correctly and no new issues arise.

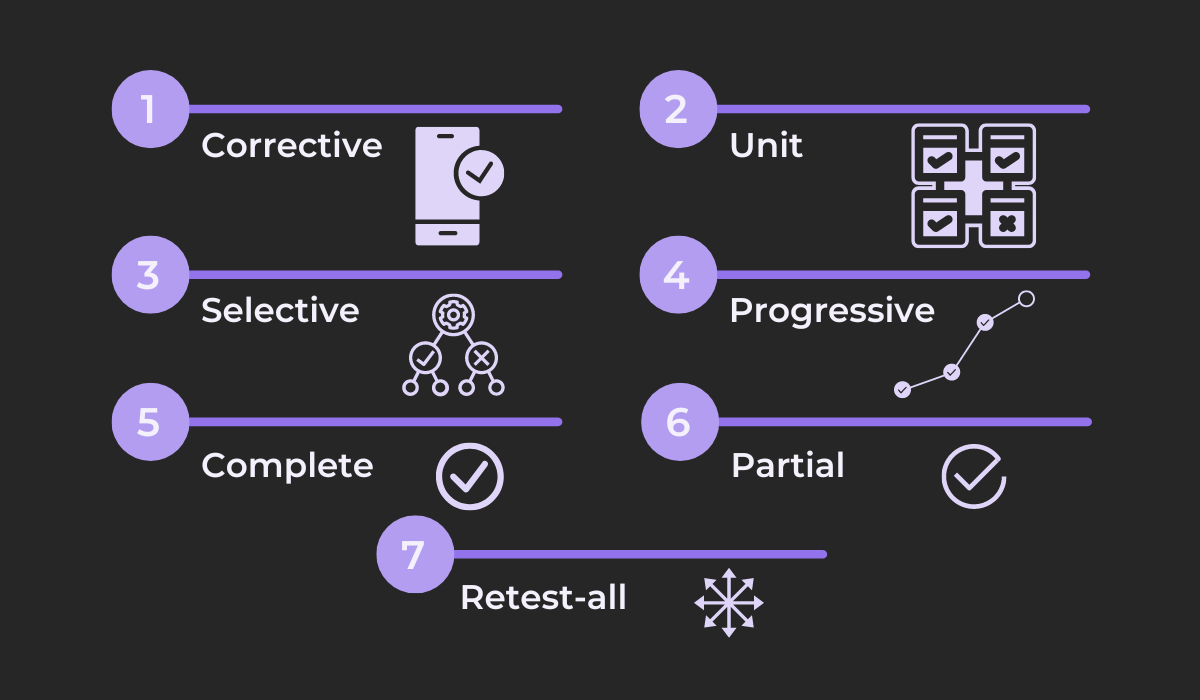

There are seven regression testing types: corrective, unit, selective, progressive, complete, partial, and retest-all.

Corrective testing is simple—you don’t change the codebase or add any new functionalities. Instead, you simply test existing features and their accompanying test cases.

Unit testing also focuses on the current codebase, but it tests the code in isolation, with all other interactions, integrations, and dependencies disabled.

Selective testing takes this one step further: it reruns a subset of existing test cases to analyze how new and already-existing code elements affect each other.
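Selective regression testing is commonly implemented by rerunning only the existing tests that exercise changed code. This sketch assumes a hand-written, hypothetical map from test names to the modules they cover; in practice, such a map might be generated from per-test coverage data.

```python
from typing import Dict, List, Set

# Hypothetical map of which source modules each test exercises.
COVERAGE: Dict[str, Set[str]] = {
    "test_login": {"auth.py", "api_client.py"},
    "test_checkout": {"cart.py", "payments.py", "api_client.py"},
    "test_profile_photo": {"camera.py", "profile.py"},
    "test_settings": {"settings.py"},
}


def select_tests(changed_files: Set[str],
                 coverage: Dict[str, Set[str]] = COVERAGE) -> List[str]:
    """Return tests that touch at least one changed module, sorted for stable output."""
    return sorted(name for name, modules in coverage.items() if modules & changed_files)


if __name__ == "__main__":
    print(select_tests({"api_client.py"}))
```

A change to `api_client.py` selects both `test_checkout` and `test_login`, while a documentation-only change selects nothing. The trade-off is that the map must stay accurate; a stale map can skip a test that should have run.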

When new test cases are written and program specifications change, you’ll want to perform progressive testing.

This testing is essential for ensuring that new code doesn’t compromise existing functionality.

If there are significant changes to the current code, complete testing is your best bet, as it provides a comprehensive view of the entire system.

Partial testing, by contrast, checks only how the newly added code interacts with the existing codebase, focusing exclusively on the new additions.

Finally, the most exhaustive approach is retest-all, which executes every test case once more to confirm that no new bugs have been introduced.

Given the scope of all these testing types, it’s well worth investing in a tool for assistance.

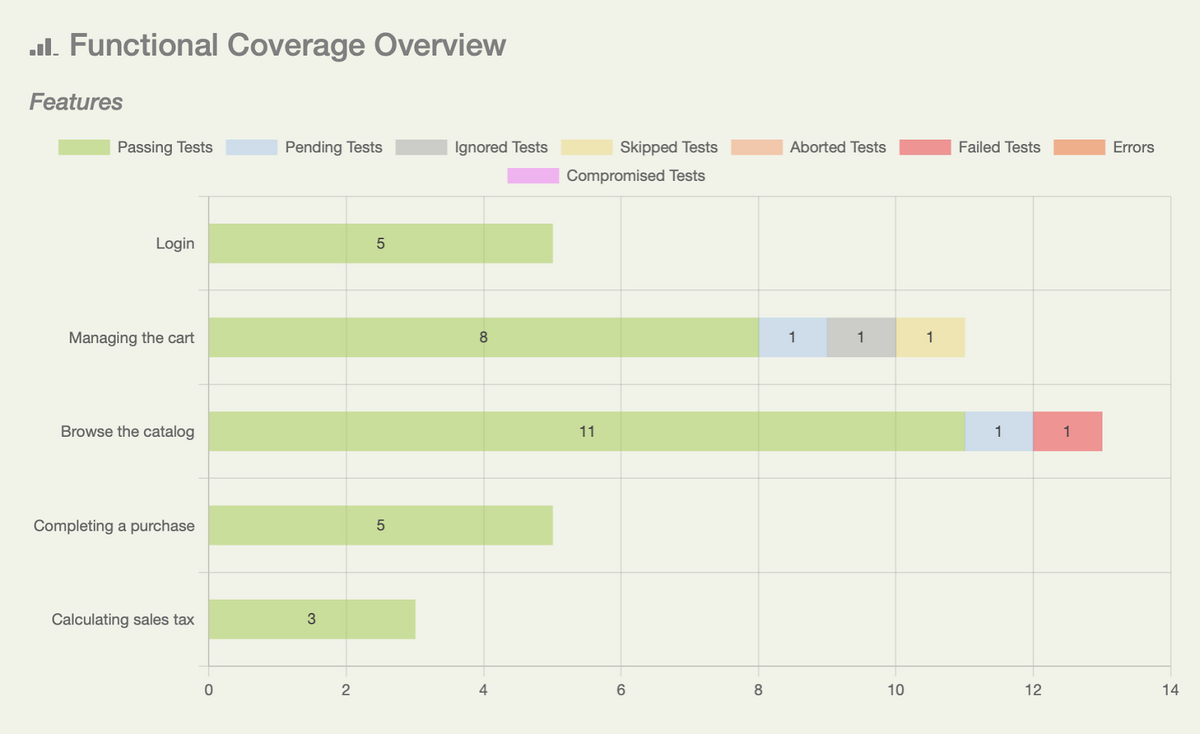

For example, Serenity is an open-source framework that generates extensive test results and informative visuals.


With this resource, you’ll have a clear overview of what’s been tested, what hasn’t, and what features need correcting. It’s an invaluable tool that will significantly facilitate regression testing.

Perform user acceptance testing

Ultimately, the best metric for measuring an app’s success is whether users enjoy it.

You could have a perfectly functioning, performance-optimized native app built in record time, but that won’t mean much if your end users are dissatisfied.

For this reason, it’s essential to organize user acceptance testing.

This testing type is typically performed by the users themselves and is a superb opportunity to unearth any usability issues you haven’t noticed.

Who knows—they might even discover technical bugs.

Load time is a good example.

After months of working on the app, you may have grown used to its speed and assume it loads quickly enough.

However, users accustomed to the response times of WhatsApp and Instagram will notice any lag and can therefore provide valuable feedback for improving your app.

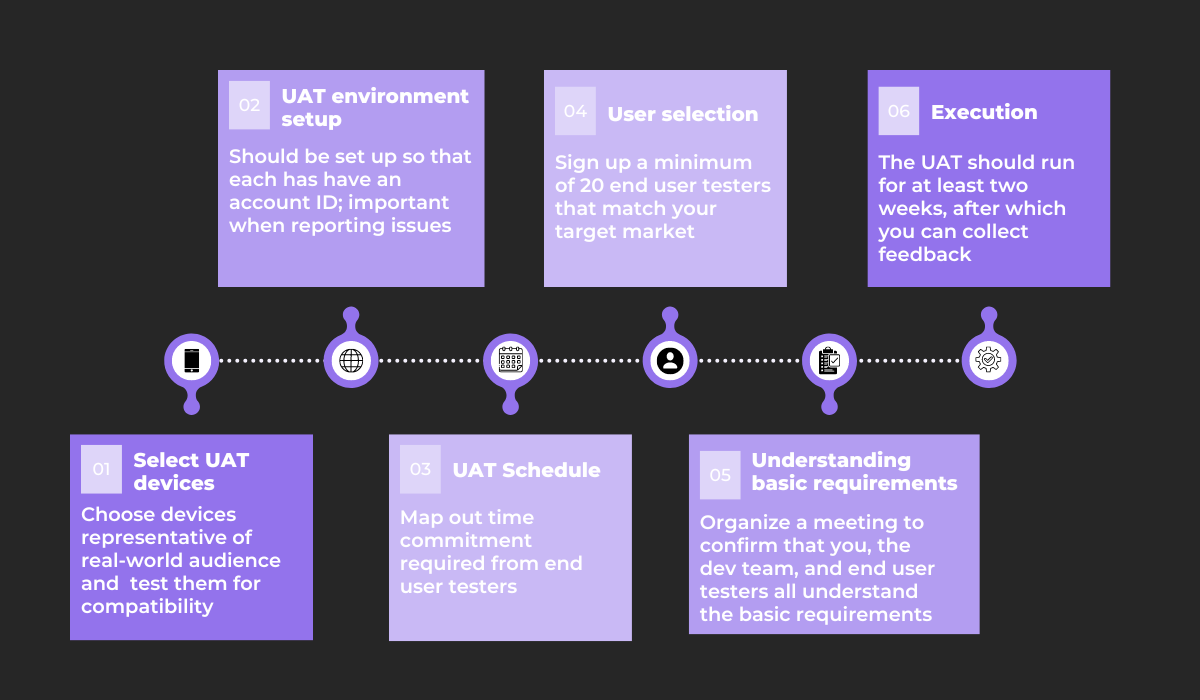

If you’re unsure how to begin user acceptance testing, try the following approach:

Your first step is to choose your UAT mobile devices. Ensure that they represent your real-world target audience, and run compatibility checks beforehand.

Once that’s done, set up the testing environment and give each tester a unique account ID.

Following that, construct a UAT schedule outlining exactly how much time you’ll require from the users.

With that solved, you can select your end-user testers. A minimum of 20 is recommended, and their demographics and interests should match your target market.
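The selection criteria above can be expressed as a simple filter. In this sketch, the `Candidate` fields and the target criteria are hypothetical; only the minimum of 20 testers comes from the recommendation above.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

MIN_TESTERS = 20  # minimum panel size recommended in the article


@dataclass
class Candidate:
    """Hypothetical sign-up record for a prospective UAT tester."""
    name: str
    age: int
    interests: Set[str]
    platform: str  # e.g. "android" or "ios"


def select_testers(candidates: List[Candidate], age_range: Tuple[int, int],
                   interests: Set[str], platforms: Set[str]) -> List[Candidate]:
    """Keep candidates who match the target market; insist on a minimum panel size."""
    low, high = age_range
    chosen = [
        c for c in candidates
        if low <= c.age <= high        # matches the target demographic
        and c.interests & interests    # shares at least one relevant interest
        and c.platform in platforms    # uses a platform you plan to test
    ]
    if len(chosen) < MIN_TESTERS:
        raise ValueError(f"only {len(chosen)} matching testers; need at least {MIN_TESTERS}")
    return chosen
```

Raising an error when too few candidates match makes the gap visible early, before the testing schedule is locked in.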

It’s then a good time to host a meeting where you explain the app’s basic requirements to the end users. This will guide them during testing.

Finally, it’s time to execute the testing. Allow at least two weeks, and then contact your testers for their feedback.

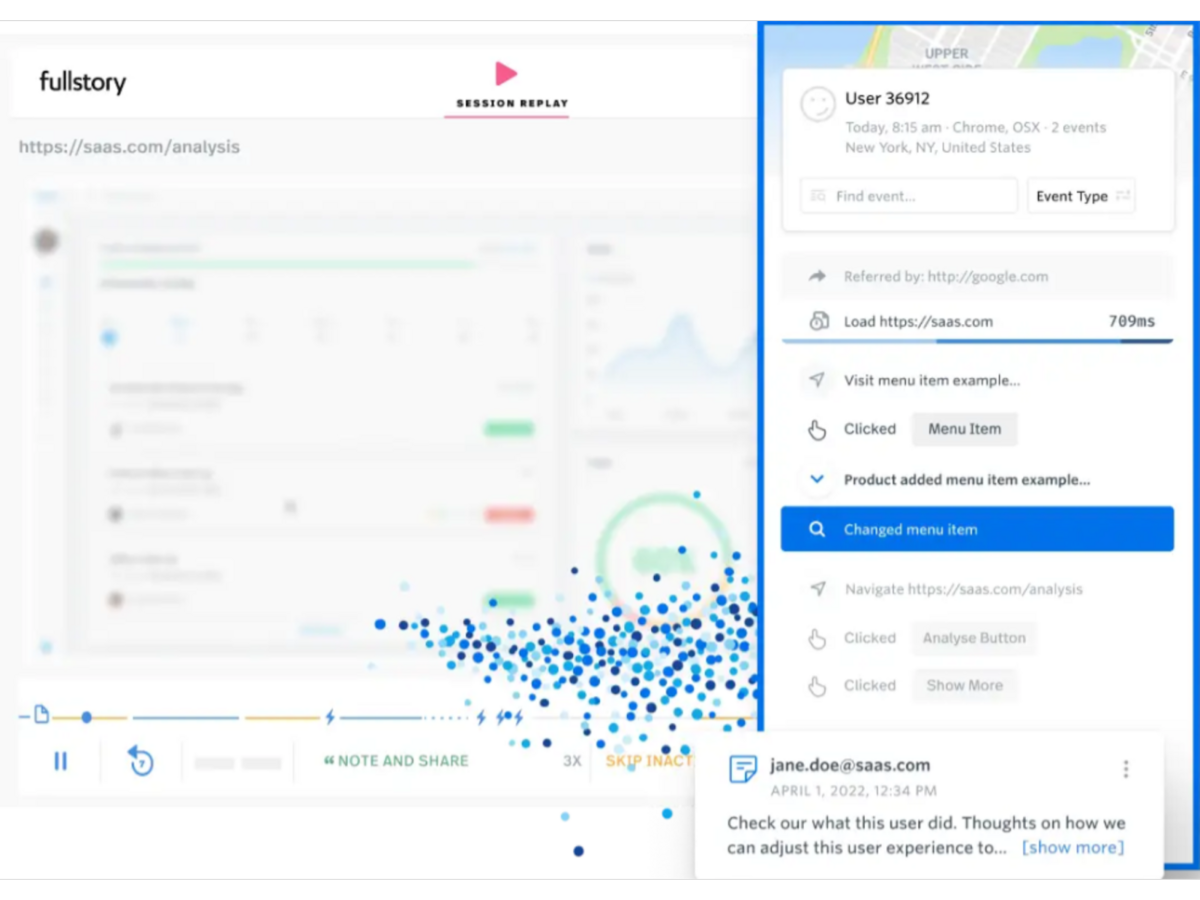

If you want in-depth insight into user testing, it’s also wise to invest in a tool such as FullStory.

This resource records your users’ testing sessions, capturing each user’s entire journey and giving you an unfiltered view of how they navigated the app.

As such, you’ll view every aspect of their testing sessions and can observe precisely how they came across errors, significantly facilitating user acceptance testing.

Conclusion

Deploying your app straight after development, untested, isn’t the best idea—you can’t know if it’ll function as envisioned.

As such, you need mobile testing to ensure everything is working correctly.

However, don’t use the same testing formula for every app. Instead, create different strategies for different app types.

Furthermore, consider implementing the QAOps framework, as it should speed up software delivery.

Regarding testing types, performance, regression, and user acceptance testing are all essential and shouldn’t be neglected.

Keep these tips in mind, and your mobile app will have excellent chances of being released to the world quickly and seamlessly.